Abstract

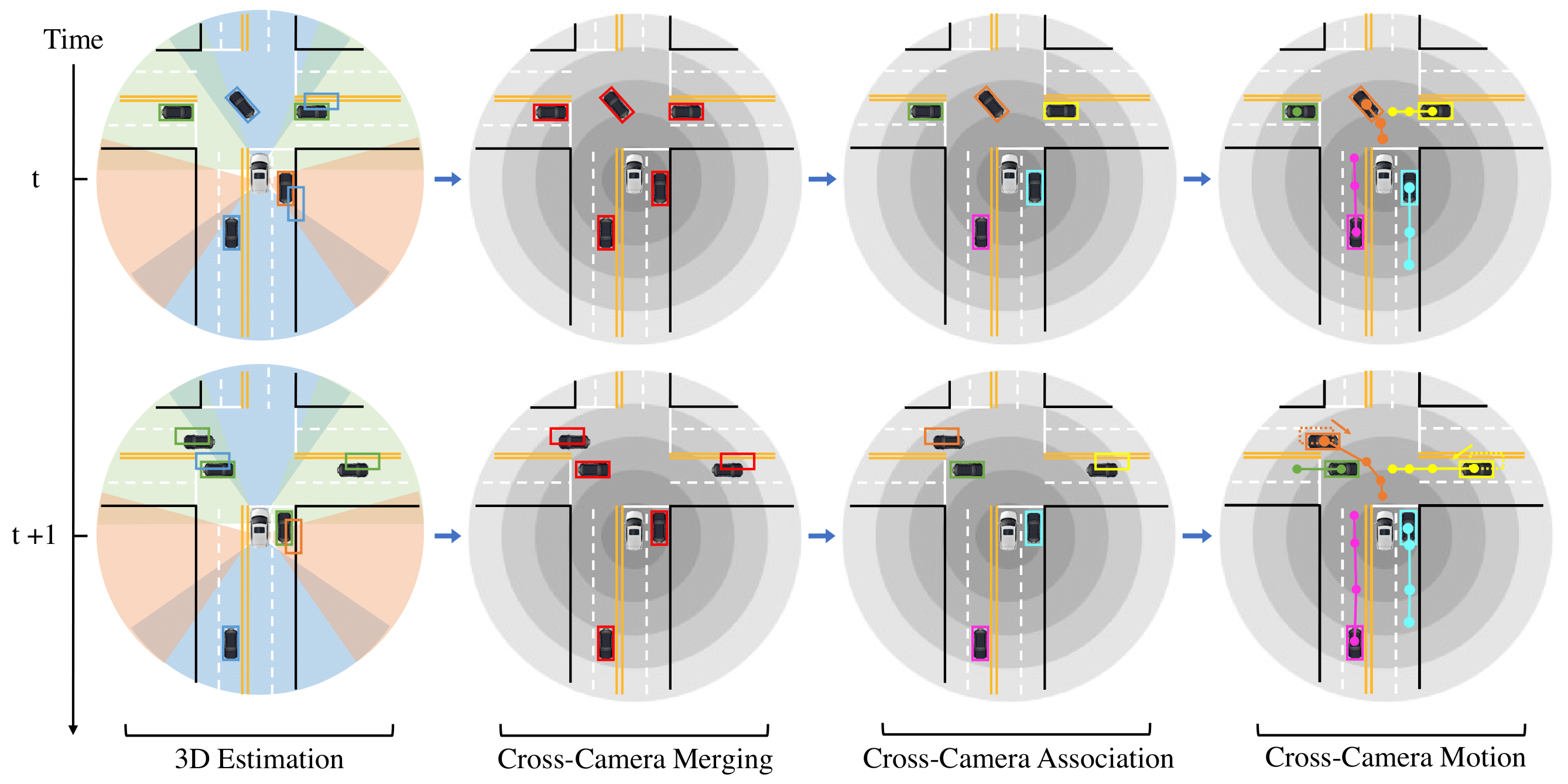

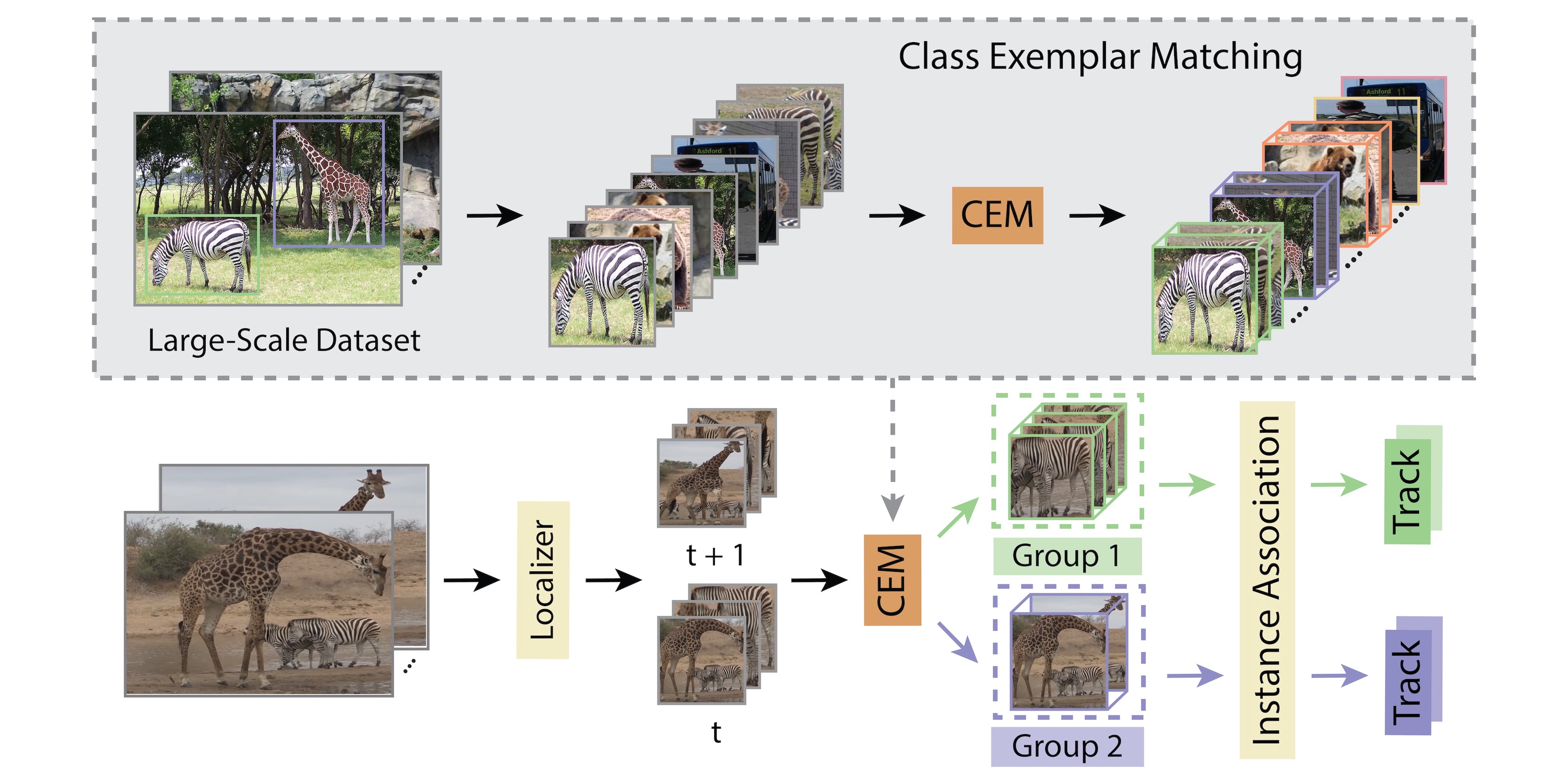

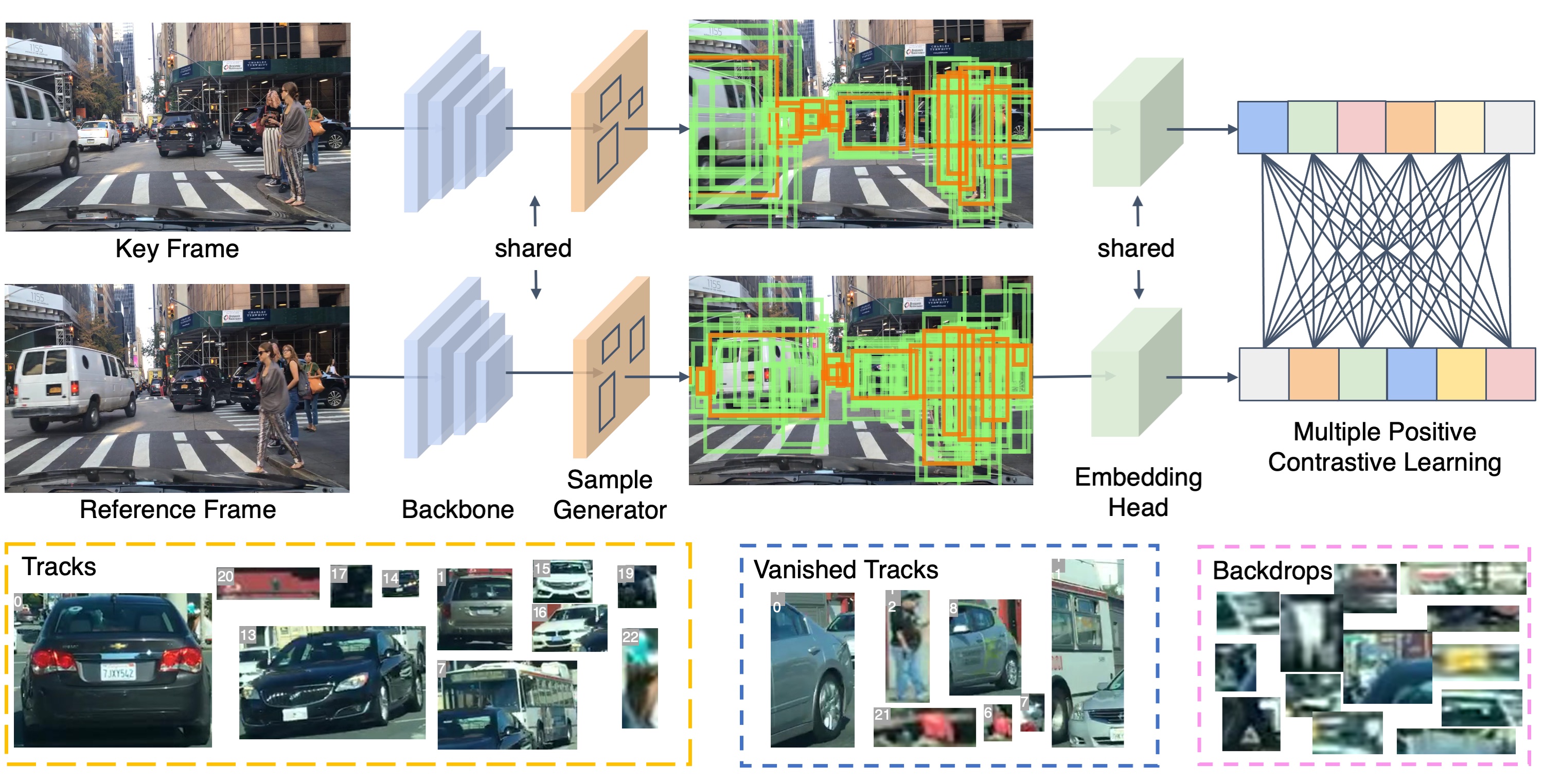

Vehicle 3D extents and trajectories are critical cues for predicting the future location of vehicles and planning future agent ego-motion based on those predictions. In this paper, we propose a novel online framework for 3D vehicle detection and tracking from monocular videos. The framework can not only associate detections of vehicles in motion over time, but also estimate their complete 3D bounding box information from a sequence of 2D images captured on a moving platform. Our method leverages 3D box depth-ordering matching for robust instance association and utilizes 3D trajectory prediction for re-identification of occluded vehicles. We also design a motion learning module based on an LSTM for more accurate long-term motion extrapolation. Our experiments on a simulation dataset and the KITTI tracking dataset show that our 3D tracking pipeline offers robust data association and tracking.

Video

Paper

Hou-Ning Hu, Qizhi Cai, Dequan Wang, Ji Lin, Min Sun, Philipp Krähenbühl, Trevor Darrell, Fisher Yu. Joint Monocular 3D Vehicle Detection and Tracking. ICCV 2019.

Code

github.com/ucbdrive/3d-vehicle-tracking

Citation

@inproceedings{Hu3DT19,
  author = {Hu, Hou-Ning and Cai, Qi-Zhi and Wang, Dequan and Lin, Ji and Sun, Min and Krähenbühl, Philipp and Darrell, Trevor and Yu, Fisher},
  title = {Joint Monocular 3D Vehicle Detection and Tracking},
  booktitle = {ICCV},
  year = {2019}
}