*Equal contribution.

Abstract

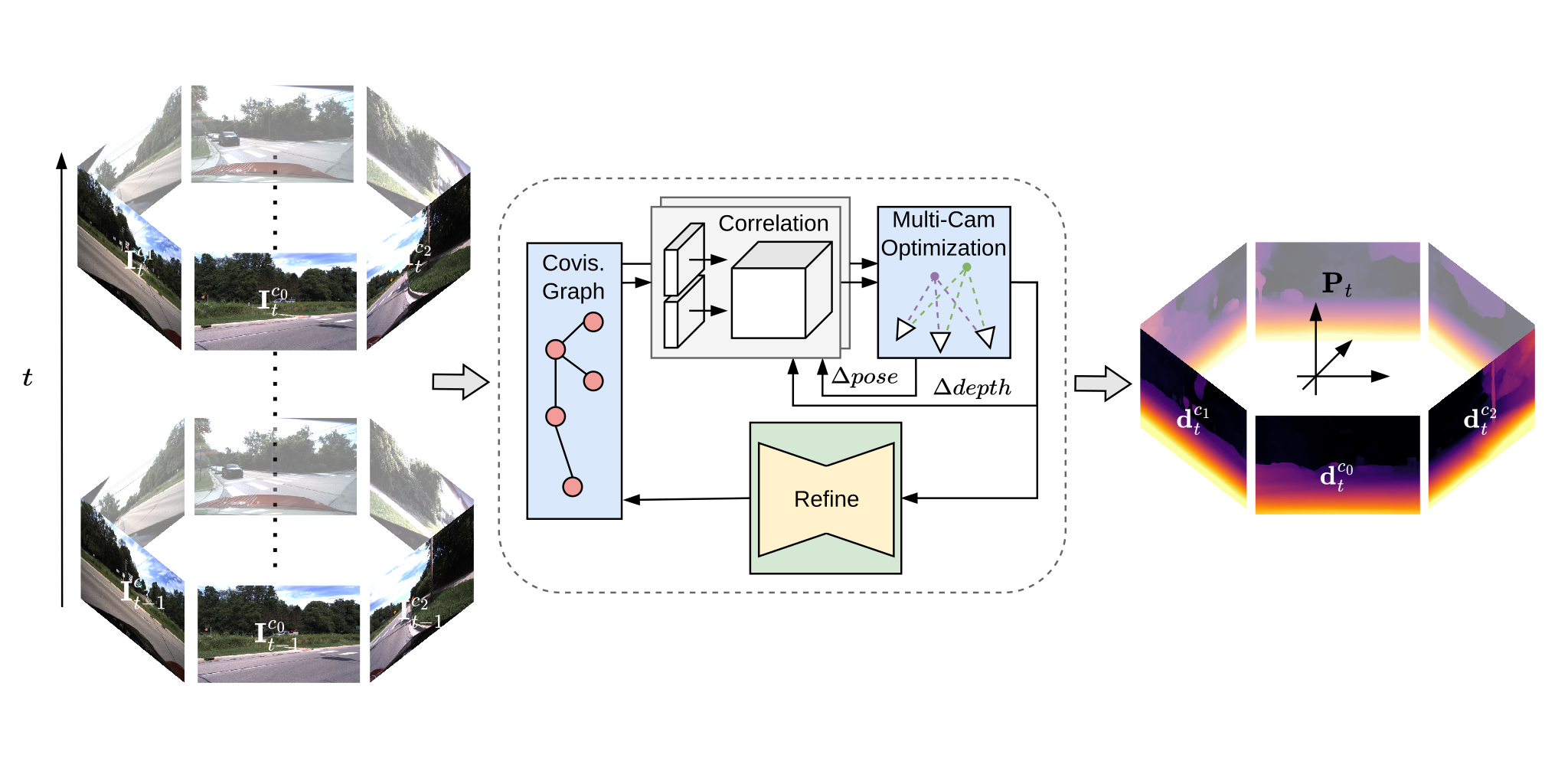

Dense 3D reconstruction and ego-motion estimation are key challenges in autonomous driving and robotics. Compared to the complex, multi-modal systems deployed today, multi-camera systems provide a simpler, low-cost alternative. However, camera-based 3D reconstruction of complex dynamic scenes has proven extremely difficult, as existing solutions often produce incomplete or incoherent results. We propose R3D3, a multi-camera system for dense 3D reconstruction and ego-motion estimation. Our approach iterates between geometric estimation that exploits spatial-temporal information from multiple cameras, and monocular depth refinement. We integrate multi-camera feature correlation and dense bundle adjustment operators that yield robust geometric depth and pose estimates. To improve reconstruction where geometric depth is unreliable, e.g., for moving objects or low-textured regions, we introduce learnable scene priors via a depth refinement network. We show that this design enables a dense, consistent 3D reconstruction of challenging, dynamic outdoor environments. Consequently, we achieve state-of-the-art dense depth prediction on the DDAD and nuScenes benchmarks.

Demonstration Video

Paper

Aron Schmied*, Tobias Fischer*, Martin Danelljan, Marc Pollefeys, Fisher Yu. "R3D3: Dense 3D Reconstruction of Dynamic Scenes from Multiple Cameras." ICCV 2023.

Code

github.com/SysCV/r3d3

Citation

@inproceedings{r3d3,
  title={R3D3: Dense 3D Reconstruction of Dynamic Scenes from Multiple Cameras},
  author={Schmied, Aron and Fischer, Tobias and Danelljan, Martin and Pollefeys, Marc and Yu, Fisher},
  booktitle={Proceedings of the IEEE International Conference on Computer Vision},
  year={2023}
}