Abstract

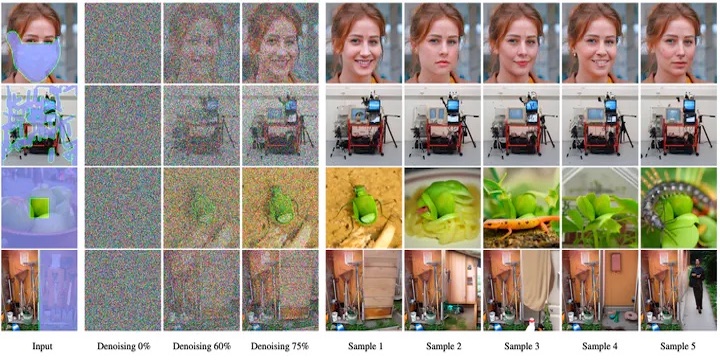

Free-form inpainting is the task of adding new content to an image in the regions specified by an arbitrary binary mask. Most existing approaches train for a certain distribution of masks, which limits their generalization capabilities to unseen mask types. Furthermore, training with pixel-wise and perceptual losses often leads to simple textural extensions towards the missing areas instead of semantically meaningful generation. In this work, we propose RePaint, a Denoising Diffusion Probabilistic Model (DDPM) based inpainting approach that is applicable to even extreme masks. We employ a pretrained unconditional DDPM as the generative prior. To condition the generation process, we only alter the reverse diffusion iterations by sampling the unmasked regions using the given image information. Since this technique does not modify or condition the original DDPM network itself, the model produces high-quality and diverse output images for any inpainting form. We validate our method for both face and general-purpose image inpainting using standard and extreme masks. RePaint outperforms state-of-the-art autoregressive and GAN approaches for at least five out of six mask distributions.
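The conditioning idea in the abstract, where only the reverse diffusion iterations are altered and the known pixels are sampled from the given image, can be illustrated with a minimal NumPy sketch. It is not the released implementation. The linear noise schedule, the fixed variance `betas[t]`, the mask convention (1 marks known pixels, 0 marks pixels to generate), and all function names are assumptions made for illustration. `dummy_predict_noise` is a hypothetical stand-in for the pretrained unconditional DDPM.

```python
import numpy as np


def make_schedule(num_steps=50, beta_start=1e-4, beta_end=0.02):
    # Assumed linear beta schedule; the pretrained model may use another one.
    betas = np.linspace(beta_start, beta_end, num_steps)
    alphas = 1.0 - betas
    alpha_bars = np.cumprod(alphas)
    return betas, alphas, alpha_bars


def dummy_predict_noise(x_t, t):
    # Hypothetical placeholder for the pretrained network's noise prediction.
    return np.zeros_like(x_t)


def repaint_step(x_t, t, x0_known, mask, schedule, predict_noise, rng):
    """One conditioned reverse step from noise level t to t - 1."""
    betas, alphas, alpha_bars = schedule
    # Cumulative alpha at level t - 1; level "-1" is the clean image.
    ab_prev = alpha_bars[t - 1] if t > 0 else 1.0

    # Known region: diffuse the given image forward to noise level t - 1.
    noise_known = rng.standard_normal(x_t.shape) if t > 0 else 0.0
    x_known = np.sqrt(ab_prev) * x0_known + np.sqrt(1.0 - ab_prev) * noise_known

    # Unknown region: ordinary unconditional DDPM reverse step.
    eps = predict_noise(x_t, t)
    mean = (x_t - betas[t] / np.sqrt(1.0 - alpha_bars[t]) * eps) / np.sqrt(alphas[t])
    noise_unknown = rng.standard_normal(x_t.shape) if t > 0 else 0.0
    x_unknown = mean + np.sqrt(betas[t]) * noise_unknown

    # Merge: keep known pixels from the image, generated pixels elsewhere.
    return mask * x_known + (1.0 - mask) * x_unknown


def inpaint(x0_known, mask, num_steps=50, seed=0, predict_noise=dummy_predict_noise):
    rng = np.random.default_rng(seed)
    schedule = make_schedule(num_steps)
    x = rng.standard_normal(x0_known.shape)  # start from pure noise
    for t in reversed(range(num_steps)):
        x = repaint_step(x, t, x0_known, mask, schedule, predict_noise, rng)
    return x
```

Because the network is only queried and never retrained or given the mask as input, the same loop works for any mask shape. With the placeholder predictor the generated region is just noise; a real pretrained model would be passed in through `predict_noise`.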

Paper

Andreas Lugmayr, Martin Danelljan, Andres Romero, Fisher Yu, Radu Timofte, Luc Van Gool. RePaint: Inpainting using Denoising Diffusion Probabilistic Models. CVPR 2022.

Code

github.com/andreas128/RePaint

Citation

@inproceedings{lugmayr2022repaint,

author = {Lugmayr, Andreas and Danelljan, Martin and Romero, Andres and Yu, Fisher and Timofte, Radu and Van Gool, Luc},

title = {{RePaint}: Inpainting using Denoising Diffusion Probabilistic Models},

booktitle = {Computer Vision and Pattern Recognition},

year = {2022}

}